Open-Source Real-Time Processing for Physical AI

Holoscan is an open-source library for building multi-modal, multi-rate processing pipelines with accelerated I/O and TensorRT-powered inference in Python or C++.


Build Complex Multi-Modal, Multi-Rate Applications

Easy-to-create operators

Implement operators in Python or C++, compose them in a graph, and iterate quickly with clear APIs.

Graph-based natural concurrency

Define dataflow graphs and let the runtime schedule work across operators — no manual threading or locks.

Low memory footprint

A single-process, multi-threaded model and efficient message passing let you fit real-time pipelines on edge devices.

Deterministic Scheduling and Resource Allocation

CUDA Green Context resource isolation

Isolate GPU work per operator for better resource control and to avoid interference between pipeline stages.

Introspection, logging, and timing analysis

Built-in tools to trace execution, log events, and analyze timing so you can tune and debug pipelines.

GPU Streaming Graphs

Run entire pipelines on the GPU with CUDA graphs and streaming; minimize CPU involvement for predictable latency.

Low-Latency CPU-Offloaded Accelerated I/O

Holoscan Sensor Bridge support

Ingest high-bandwidth sensor and network streams with HSB and Ethernet support, ready for real-time processing.

Rivermax, DPDK, and GPUNetIO examples

Reference implementations and examples using industry frameworks for low-latency, CPU-offloaded I/O.

Designed for 100+ Gbps sensor workloads

Architected to handle extreme throughput so your pipelines keep up with multi-Gbps sensors and feeds.
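To put the 100+ Gbps figure in perspective, the following is a rough back-of-the-envelope sketch of raw sensor bandwidth. The camera parameters (3840x2160, 16 bits per pixel, 60 frames per second) are hypothetical and chosen only for illustration, the helper names are not part of Holoscan, and the calculation ignores protocol overhead.

```python
def raw_bitrate_gbps(width, height, bits_per_pixel, fps):
    """Uncompressed video bitrate in gigabits per second (1 Gbps = 1e9 bit/s)."""
    return width * height * bits_per_pixel * fps / 1e9


def streams_per_link(link_gbps, stream_gbps):
    """Number of whole streams that fit in a link, ignoring protocol overhead."""
    return int(link_gbps // stream_gbps)


# Hypothetical sensor: 3840x2160 pixels, 16 bits per pixel, 60 frames per second.
camera_gbps = raw_bitrate_gbps(3840, 2160, 16, 60)
cameras_on_100g = streams_per_link(100, camera_gbps)
print(f"{camera_gbps:.2f} Gbps per camera, {cameras_on_100g} cameras per 100 Gbps link")
```

Under these assumptions a single uncompressed stream already needs several Gbps, so a handful of such sensors is enough to approach a 100 Gbps link.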

$ pip install holoscan-cu12
$ python
Python 3.12.3
>>> from holoscan.core import Application
>>> app = Application()
>>> app.run()

Install in seconds

Holoscan offers both Python and C++ packages, as well as NGC containers, Conda packages, and Yocto recipes.

The full installation guide (NGC containers, Conda, platform-specific steps) is available in the SDK Installation docs.

- Yocto/OpenEmbedded: For Yocto-based deployments, check out the meta-tegra-holoscan layer.

Define your own operators

Operators — the nodes in your compute graph — support any number of input or output ports. Define them in your application or package them into reusable libraries.

Call into CuPy, MATX, or any other accelerated libraries. Holoscan uses the industry-standard DLPack format for tensors.

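import cusignal
from holoscan.core import Operator, OperatorSpec

class ResampleTwoThirdsOp(Operator):
    def setup(self, spec: OperatorSpec):
        # Declare one input port and one output port.
        spec.input("in")
        spec.output("out")

    def compute(self, op_input, op_output, context):
        sig = op_input.receive("in")
        # Resample by a factor of 2/3 (upsample by 2, downsample by 3).
        resample_sig = cusignal.resample_poly(sig, 2, 3)
        op_output.emit(resample_sig, "out")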
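As a quick sanity check on the 2/3 ratio used in the operator above, here is a small pure-Python sketch of how the sample count and sample rate change. It assumes cusignal follows the SciPy `resample_poly` convention of rounding the output length up; the helper names `resampled_length` and `resampled_rate` are hypothetical and not part of any library.

```python
from math import gcd


def resampled_length(n_samples, up, down):
    """Output length of rational resampling, rounded up (SciPy convention)."""
    g = gcd(up, down)
    up, down = up // g, down // g
    # Integer ceiling of n_samples * up / down.
    return -(-n_samples * up // down)


def resampled_rate(rate_hz, up, down):
    """Sample rate after resampling by the ratio up/down."""
    return rate_hz * up / down


n_out = resampled_length(9000, 2, 3)
rate_out = resampled_rate(48_000, 2, 3)
print(n_out, rate_out)
```

A block of 9000 samples at 48 kHz would leave the operator as 6000 samples at 32 kHz, which matters when sizing buffers for downstream operators.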

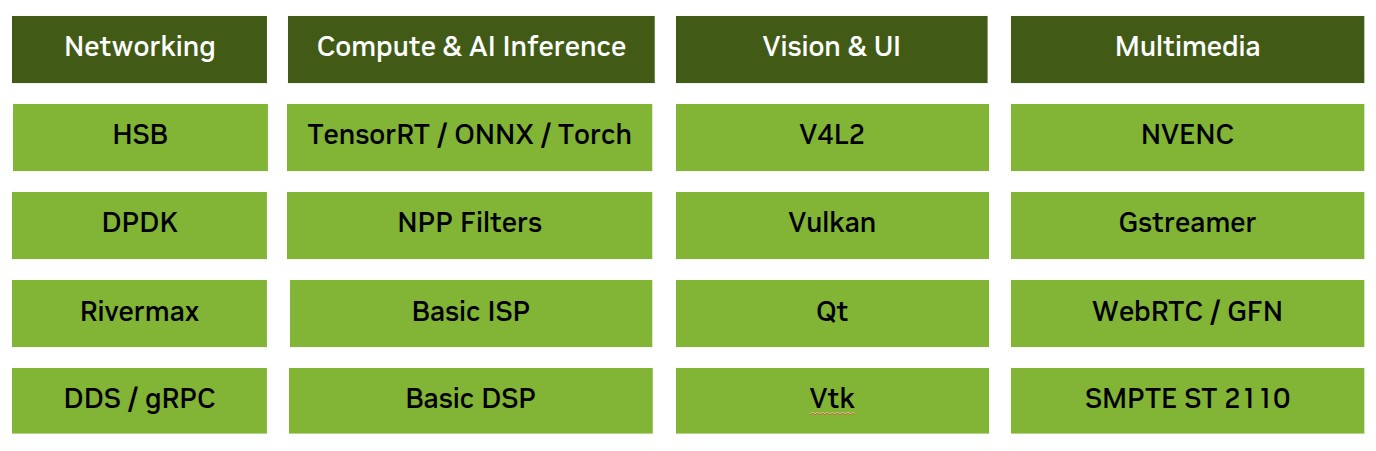

Leverage built-in operators

Browse our catalog of built-in operators for I/O, inference, and visualization, including TensorRT-based inference, EtherCAT motor control, and GeForce NOW streaming server and client operators.

Plus, Holoscan plays well with others: bridge to ROS2, GStreamer, WebRTC (server and client), and DDS.

Wire up your graph

Create instances of your operators and any resources you need, and wire them together in your application. Each port on an operator has conditions that let the scheduler determine when to run the operator’s compute() method.

Holoscan provides both single-threaded and multi-threaded schedulers that evaluate your graph and schedule operations. Built-in resource management lets your operators use allocators, clocks, and other shared resources for memory and execution control.
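The idea of port conditions gating execution can be illustrated with a toy model in plain Python. This is only a sketch of the concept, not Holoscan's scheduler or API: `Port`, `ToyOperator`, and `run_greedy` are hypothetical names, and the only condition modeled is "a message is available on every input port".

```python
from collections import deque


class Port:
    """Toy input port backed by a FIFO queue (hypothetical, not a Holoscan class)."""

    def __init__(self, min_messages=1):
        self.queue = deque()
        self.min_messages = min_messages

    def ready(self):
        # Condition: enough messages are waiting on this port.
        return len(self.queue) >= self.min_messages


class ToyOperator:
    """Toy stand-in for an operator with one or more input ports."""

    def __init__(self, name, fn, num_inputs=1):
        self.name = name
        self.fn = fn
        self.inputs = [Port() for _ in range(num_inputs)]
        self.downstream = []  # list of (operator, input_index) pairs

    def ready(self):
        # The operator may run only when every input port's condition holds.
        return all(port.ready() for port in self.inputs)

    def compute(self):
        args = [port.queue.popleft() for port in self.inputs]
        result = self.fn(*args)
        for op, index in self.downstream:
            op.inputs[index].queue.append(result)


def run_greedy(operators):
    """Single-threaded loop: keep running any operator whose conditions hold."""
    trace = []
    progressed = True
    while progressed:
        progressed = False
        for op in operators:
            if op.ready():
                op.compute()
                trace.append(op.name)
                progressed = True
    return trace


# Two branches fan in to "add", which feeds a sink that collects results.
results = []
scale = ToyOperator("scale", lambda x: x * 2)
offset = ToyOperator("offset", lambda x: x + 1)
add = ToyOperator("add", lambda a, b: a + b, num_inputs=2)
sink = ToyOperator("sink", results.append)
scale.downstream.append((add, 0))
offset.downstream.append((add, 1))
add.downstream.append((sink, 0))

scale.inputs[0].queue.extend([1, 2])  # two messages on one branch
offset.inputs[0].queue.append(10)     # one message on the other
trace = run_greedy([add, sink, scale, offset])
print(trace, results)
```

In this toy run, `add` executes only once because its second input receives a single message; the extra message from `scale` stays queued until the other branch produces more data. A multi-threaded scheduler would apply the same readiness checks while running ready operators in parallel.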

from holoscan.core import Application
from holoscan.operators import FormatConverterOp, HolovizOp, V4L2VideoCaptureOp
from holoscan.resources import RMMAllocator

class WebcamViewer(Application):
    def compose(self):
        source = V4L2VideoCaptureOp(self, pass_through=True)
        fmt = FormatConverterOp(self, in_dtype="yuyv", out_dtype="rgb888", pool=RMMAllocator(self))
        viz = HolovizOp(self)
        self.add_flow(source, fmt)
        # Map fmt's "tensor" output port to viz's "receivers" input port.
        self.add_flow(fmt, viz, {("tensor", "receivers")})

app = WebcamViewer()
app.run()

Holoscan Sensor Bridge

Low-latency, hardware-accelerated Ethernet for sensors and actuators

Low-latency Ethernet bridging

Enumerate, configure, and stream data, bridging Holoscan to any camera, radar, lidar, or other sensor or actuator.

Embedded open-source stack

Lightweight Verilog or software IP that integrates into any FPGA, MCU, SoC, or ASIC with no external DRAM, plus PC-based emulation for hardware-in-the-loop testing.

Full Thor support

Holoscan Sensor Bridge is the only hardware-accelerated Ethernet solution for Thor, and ships with open-source userspace drivers and example pipelines.

From the Blog

- What's New in Holoscan 4.3: Holoscan 4.3 smooths operator authoring, hardens Holoviz display synchronization, adds clearer 10-bit visualization warnings, and fixes a MetadataDictionary emit crash.
- What's New in Holoscan 4.2: GPU-resident graphs now support full DAGs (fan-out, fan-in, and parallel branches), the stack aligns with NVIDIA Production Branch 6, MatX is exported for downstream CMake projects, and Holohub adds new reference apps alongside the release.
- Announcing Holoscan SDK 4.0: Holoscan 4.0 introduces a GPU-resident, distributed runtime for building real-time Physical AI systems that ingest high-bandwidth sensor data, run AI and signal processing pipelines, and take deterministic actions in the physical world, enabling developers to build Raw-to-Insights sensor platforms entirely in software.

Holohub: Community Reference Apps

Operators, workflows, examples, and blueprints for building processing pipelines with Holoscan